Semiconductors are the backbone of electronic devices. These chips contain miniature components and are inspected rigorously at every phase of assembly to ensure their quality. Very often they are examined under a microscope with the help of micromanipulators, particularly when a device fails and a failure analysis is needed to determine the cause. Micromanipulators therefore play a crucial role in testing the quality of the semiconductor devices used in modern electronics. This post discusses how micromanipulators are used effectively in semiconductor device testing.

Micromanipulators: An Overview

Working Principles of Micromanipulators

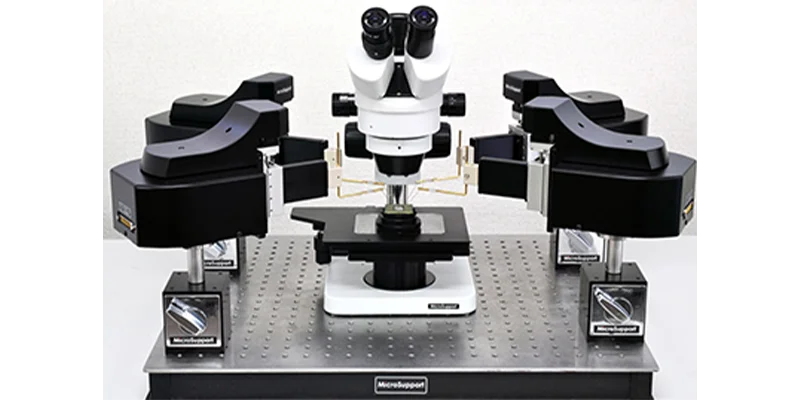

A micromanipulator enables precise manipulation or sampling of objects such as a test coupon, wafer, or die. It lets the user physically interact with the sample through arms fitted with probes. The movement can be controlled manually or electrically. The key requirement is precise movement of the probe at the required angles with an accuracy of less than a micron, which calls for actuators that offer controlled movement with micron and sub-micron precision. A micromanipulator can be covered for safety reasons and operated remotely with a mouse, with the output displayed on a computer monitor.
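As a rough illustration of what sub-micron positioning means in practice, the sketch below converts a requested probe move into whole actuator steps and reports the leftover positioning error. The 50 nm step size and the function name `plan_move` are assumptions chosen for illustration; they are not specifications of any particular micromanipulator.

```python
def plan_move(target_um, step_nm=50.0):
    """Quantize a requested displacement (in microns) into whole actuator steps.

    step_nm is a hypothetical actuator step size in nanometres.
    Returns (steps, achieved_um, error_um).
    """
    step_um = step_nm / 1000.0             # convert nanometres to microns
    steps = round(target_um / step_um)     # nearest whole number of steps
    achieved_um = steps * step_um          # displacement actually reachable
    error_um = achieved_um - target_um     # residual positioning error
    return steps, achieved_um, error_um


if __name__ == "__main__":
    # Hypothetical three-axis move, in microns
    for axis, target in (("x", 12.34), ("y", -3.07), ("z", 0.8)):
        steps, achieved, error = plan_move(target)
        print(f"{axis}: {steps} steps, reaches {achieved:.3f} um, error {error:+.3f} um")
```

Under these assumptions the residual error never exceeds half a step, so a finer step size translates directly into tighter probe placement.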

Benefits of Using Micromanipulators in Semiconductor Device Testing

Here are some benefits micromanipulators offer in semiconductor device testing.

- Sub-micron positional control leads to enhanced accuracy and efficiency when testing wafers with complex circuits.

- Physically interacting with the sample while viewing the circuitry and minute components in various cross sections and at various angles helps yield accurate results when detecting failures and other issues in circuits. This improves throughput in testing processes.

- A micromanipulator can be completely covered and operated remotely, which improves safety. The controlled movement of the probes also reduces the risk of damage to delicate devices.

- These devices enable repeatability in measurements.

- They support a more thorough evaluation of semiconductor devices, which helps ensure normal functioning and avoid product failures and recalls.

- For advanced IC systems that involve air-sensitive devices during manufacturing, manipulation and testing can be carried out in a glovebox or another inert-atmosphere environment.

Applications of Micromanipulators in Semiconductor Device Testing

Here are some application areas of micromanipulators in the semiconductor industry.

- Probing: The actuators in micromanipulators help in positioning probes for electrical characterization.

- Contact manipulation: Micromanipulators are used to manipulate microscopic components during testing, which may include separating certain materials from the substrate or adding new ones. Similarly, wires may be cut as part of the testing process.

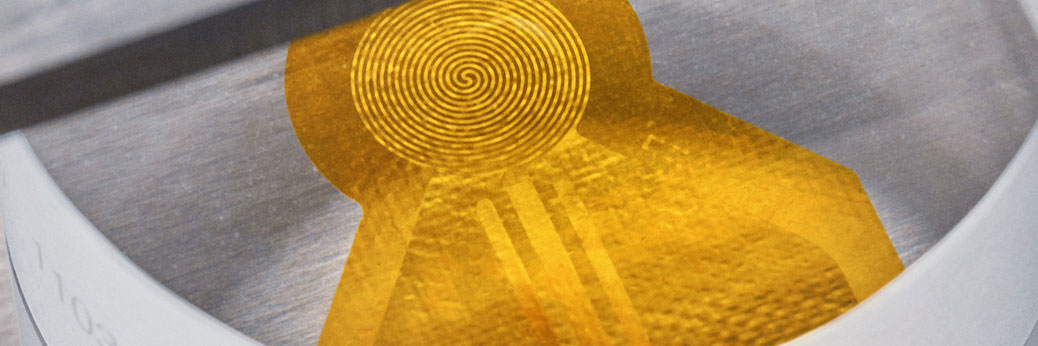

- Failure analysis: Micromanipulators help isolate specific components on a wafer, which aids in analyzing device failures by physically interacting with the components using probes. Once isolated from the circuit, physical defects can be transferred to carbon tape or another substrate for more advanced testing.

- Microfluidics: Micromanipulators are now used to test microfluidic chips. A microfluidic chip has embedded microchannels that are connected to the outside environment through inputs and outputs pierced through the wafer.

- Wafer applications: Aside from wafer-level testing, micromanipulators can be used to test individual dies. They also find applications in the packaging and testing of integrated circuits. This is essential for removing liquids and gases for chips embedded in flow control systems.

If you have any questions about using electrical or manual micromanipulators in your semiconductor application, custom options, or anything else, you can consult Barnett Technical Services. The company is an authorized distributor for various brands of micromanipulator systems, such as Micro Support.